Imagine bringing home a single robot to be your all-in-one kitchen assistant: you want it to brew your morning Gongfu tea, make fresh juice in the afternoon, and mix the perfect cocktail at night. It may have been trained extensively in a lab, but in your house the counter is slightly higher, the fruit is shaped differently, and your cocktail shaker is transparent. Pre-trained Vision-Language-Action (VLA) models provide an incredible starting point, yet real-world deployment is never a fixed test distribution. This leaves a critical, unsolved challenge: how do we take the heterogeneous experience generated across a fleet of robots and use it to post-train a single generalist model on a wide range of tasks simultaneously?

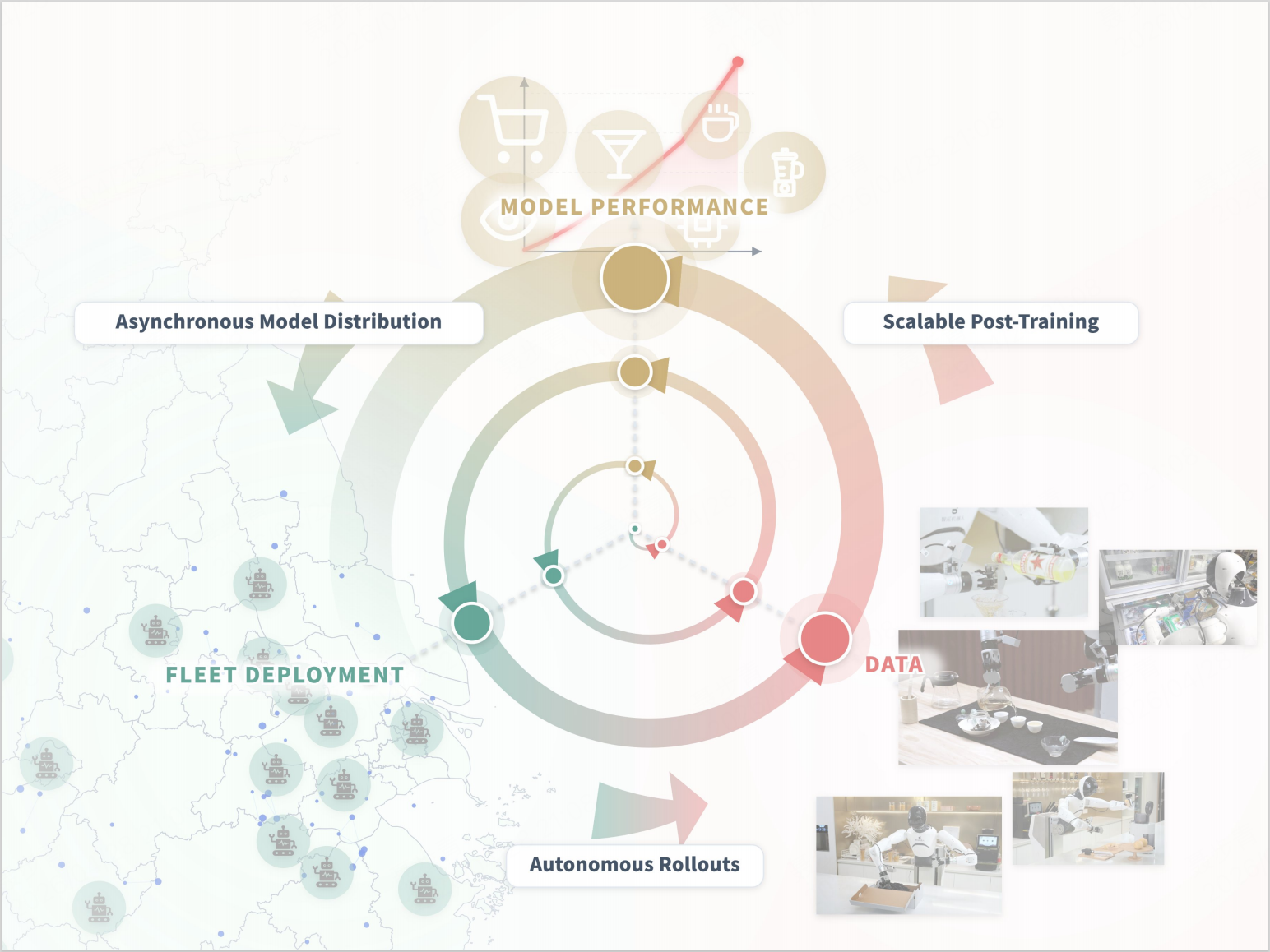

We present Learning While Deploying (LWD), a fleet-scale offline-to-online RL framework for continual post-training of generalist VLA policies. Instead of treating deployment as the finish line where a policy is merely evaluated, LWD turns it into a training loop through which the policy improves. A pre-trained policy is deployed across a robot fleet, and both autonomous rollouts and human interventions are aggregated into a shared replay buffer for offline and online updates. The updated policy is then redeployed, enabling continuous improvement by leveraging interaction data from the entire fleet.

A Generalist Learns Beyond Demonstrations

Some robot learning systems have explored data flywheels: deploying a policy, collecting new robot data, extracting high-quality behaviors, and training the next policy to imitate them. While this supports scalable improvement, it still treats deployment mainly as a source of expert demonstrations. Prior post-training systems mainly focus on specialist policies, leaving fleet-scale post-training of a single generalist policy across diverse tasks unresolved.

Real robot deployment produces more than good actions to copy. Successful executions, failed attempts, partial progress, failure recoveries, and human interventions are all usable learning signal. This is where LWD differs from existing imitation-based policy updates: instead of filtering deployment experience down to a smaller set of high-quality demonstrations, LWD learns from the entire spectrum of robot experience. The key is that LWD does not rely on imitation learning; it uses offline-to-online reinforcement learning to turn heterogeneous robot experience into policy improvement.
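To make the contrast concrete, here is a minimal sketch assuming a hypothetical `Episode` record and a sparse terminal reward (1 for success, 0 otherwise). Neither the record format nor the reward scheme is taken from LWD. An imitation-style flywheel keeps only successful episodes, while an RL learner can turn every episode, including failures and intervened segments, into training transitions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Episode:
    # Hypothetical record: observations has one more entry than actions.
    observations: List[str]
    actions: List[str]
    success: bool
    intervened_steps: Tuple[int, ...] = ()


def filter_for_imitation(episodes: List[Episode]) -> List[Episode]:
    """Imitation-style flywheel: discard everything except successful episodes."""
    return [ep for ep in episodes if ep.success]


def to_rl_transitions(episodes: List[Episode]):
    """RL-style use: every step of every episode becomes a transition.

    Each transition is (s, a, r, s_next, done, intervened). Assumes a sparse
    terminal reward: 1.0 on the last step of a successful episode, else 0.0.
    """
    transitions = []
    for ep in episodes:
        horizon = len(ep.actions)
        for t in range(horizon):
            done = t == horizon - 1
            reward = 1.0 if (done and ep.success) else 0.0
            transitions.append(
                (ep.observations[t], ep.actions[t], reward,
                 ep.observations[t + 1], done, t in ep.intervened_steps)
            )
    return transitions


episodes = [
    Episode(["s0", "s1", "s2"], ["a0", "a1"], success=True),
    Episode(["s0", "s1", "s2", "s3"], ["a0", "a1", "a2"], success=False,
            intervened_steps=(1,)),
]
kept = filter_for_imitation(episodes)      # 1 episode survives
transitions = to_rl_transitions(episodes)  # 5 transitions, failure included
```

The failed, partially intervened episode contributes three transitions with zero reward instead of being thrown away; the critic can then learn that these states lead to failure.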

An Offline-to-Online RL Data Flywheel

LWD drives self-improvement during real-world robot deployment through a closed-loop data flywheel. It begins with a pretrained VLA policy. The policy has broad competence from large-scale robot data, but it is not assumed to be deployment-ready. LWD first performs offline RL initialization using previously collected robot data, including expert demonstrations, historical rollouts, and exploratory data around failure modes. This gives the policy and critic a stable starting point before online deployment.

Then the online stage begins. The current policy is deployed across a fleet of robots. Each robot executes real-world tasks and uploads trajectories into a shared online replay buffer. Some trajectories succeed. Some fail. Some include human interventions. The centralized learner trains on a mixture of the static offline buffer and the growing online buffer, then periodically pushes improved checkpoints back to the fleet.
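A minimal sketch of how a centralized learner might draw each training batch from both buffers. The function name and the `online_fraction` knob are hypothetical; the mixing ratio LWD actually uses is not stated in this post.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def sample_mixed_batch(
    offline_buffer: Sequence[T],
    online_buffer: Sequence[T],
    batch_size: int,
    online_fraction: float,
    rng: random.Random,
) -> List[T]:
    """Sample uniformly (with replacement) from a static offline buffer and a growing online buffer.

    online_fraction is a hypothetical knob. While the online buffer is still
    empty (e.g. right after offline initialization), the batch is all offline.
    """
    n_online = round(batch_size * online_fraction) if online_buffer else 0
    n_offline = batch_size - n_online
    batch = [rng.choice(offline_buffer) for _ in range(n_offline)]
    batch += [rng.choice(online_buffer) for _ in range(n_online)]
    rng.shuffle(batch)  # avoid ordering the batch by data source
    return batch


rng = random.Random(0)
offline = [("offline", i) for i in range(100)]
online = [("online", i) for i in range(10)]
batch = sample_mixed_batch(offline, online, batch_size=32, online_fraction=0.25, rng=rng)
```

Fixing the online share per batch, rather than sampling from the union of both buffers, keeps the small early online buffer from being drowned out by the much larger offline one.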

This creates a closed-loop RL data flywheel:

LWD-based data flywheel
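The flywheel can be sketched as a toy simulation. Everything in it is hypothetical: `Learner.update` stands in for the real critic and policy updates, robots are simulated by a random success flag, and batch sizes are arbitrary. The sketch only shows the order of operations: offline initialization, fleet rollouts into a shared buffer, mixed training, and checkpoint redeployment.

```python
import random
from dataclasses import dataclass


@dataclass
class Learner:
    """Hypothetical centralized learner; update() stands in for critic and policy steps."""
    version: int = 0
    updates: int = 0

    def update(self, batch) -> None:
        self.updates += 1  # placeholder: a real learner would take gradient steps on `batch`

    def export_checkpoint(self) -> int:
        self.version += 1
        return self.version


def run_flywheel(offline_buffer, num_robots, rounds, episodes_per_robot,
                 updates_per_round, rng):
    learner = Learner()
    # Stage 1: offline RL initialization on previously collected data.
    for _ in range(updates_per_round):
        learner.update(rng.sample(offline_buffer, min(8, len(offline_buffer))))
    deployed = learner.export_checkpoint()

    online_buffer = []
    for _ in range(rounds):
        # Stage 2a: every robot runs the deployed checkpoint and uploads episodes.
        for robot_id in range(num_robots):
            for _ in range(episodes_per_robot):
                online_buffer.append({
                    "robot": robot_id,
                    "checkpoint": deployed,
                    "success": rng.random() < 0.5,  # simulated outcome
                })
        # Stage 2b: train on a mixture of the static offline and growing online buffers.
        for _ in range(updates_per_round):
            batch = (rng.sample(offline_buffer, min(4, len(offline_buffer)))
                     + rng.sample(online_buffer, min(4, len(online_buffer))))
            learner.update(batch)
        # Stage 2c: push the improved checkpoint back to the fleet.
        deployed = learner.export_checkpoint()
    return learner, online_buffer, deployed


learner, online_buffer, deployed = run_flywheel(
    offline_buffer=list(range(20)), num_robots=3, rounds=2,
    episodes_per_robot=2, updates_per_round=5, rng=random.Random(0),
)
```

Tagging each uploaded episode with the checkpoint that produced it is one simple way to track how the online data shifts as the policy improves.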

The important distinction is that LWD uses a unified reinforcement learning method across the offline and online stages. Offline data provides a stable foundation, while online data exposes the policy to the real deployment distribution. The same learner uses both to improve a single generalist policy, which leads to higher training stability. In this sense, LWD is not just a deployment data flywheel; it is an offline-to-online RL data flywheel.

Challenges of Fleet-Scale RL for Generalist Policies

Learning from deployment sounds natural, but applying RL to a generalist robot fleet is difficult. Fleet replay is highly heterogeneous, mixing tasks with different instructions, horizons, reward sparsity, success frequencies, and degrees of human intervention. Value learning must be stable enough to extract useful improvement signals from sparse and delayed rewards without overfitting to transient online data. In addition, modern VLA policies often use generative action heads such as flow matching. These policies generate actions through a multi-step denoising process, which makes likelihood-based policy extraction and direct policy-gradient training difficult to apply.

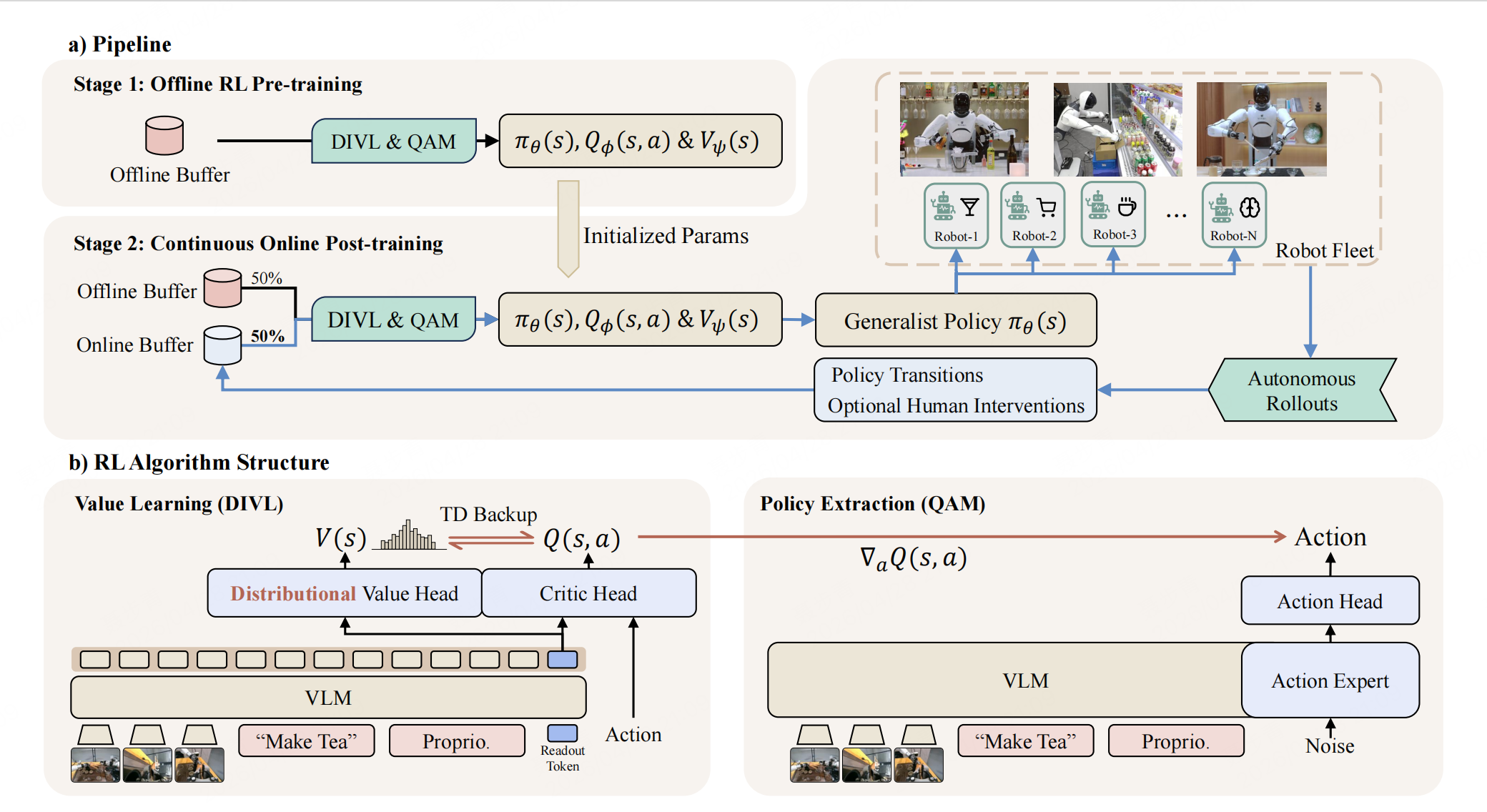

LWD addresses these challenges with two components: Distributional Implicit Value Learning (DIVL) for value learning, and Q-learning with Adjoint Matching (QAM) for policy extraction.

Algorithm structure of LWD

Distributional Implicit Value Learning

To learn from deployment experience, the system must decide which actions contributed to success, which led to failure, and how early decisions affected outcomes much later. This is crucial for long-horizon tasks such as brewing Gongfu tea, where a late-stage failure may originate from an earlier poor grasp or unstable placement.

In LWD, this problem is further complicated: the learner trains on a continually changing mixture of demonstrations, historical rollouts, online attempts, interventions, successes, and failures. With sparse rewards, delayed feedback, and shifting data distributions, directly fitting a scalar value target becomes unstable and difficult to scale.

LWD addresses this with Distributional Implicit Value Learning (DIVL), chosen for its scalability. Instead of regressing a single scalar value, DIVL learns a distribution over the action-values of dataset actions and extracts a quantile statistic from it as the temporal-difference (TD) bootstrap target. This preserves IQL's principle of in-distribution value learning while capturing the variability across heterogeneous replay and reducing the overestimation that comes from maximizing over out-of-distribution actions. For long-horizon tasks, LWD further uses multi-step TD targets to propagate sparse terminal rewards through long episodes more efficiently.
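The two ingredients above, a quantile statistic as the bootstrap value and a multi-step TD target, can be sketched in plain Python. This is an illustration under assumptions, not LWD's implementation: the quantile level `tau=0.9`, the learning rate, and fitting a single scalar (rather than training a distributional critic network) are all hypothetical choices. Minimizing the pinball loss over sampled values recovers their τ-quantile, so an upper quantile of the dataset-action values plays a role similar to the upper expectile in IQL.

```python
from typing import Sequence


def pinball_subgradient(pred: float, target: float, tau: float) -> float:
    """Subgradient w.r.t. pred of the quantile (pinball) loss rho_tau(target - pred)."""
    if target > pred:
        return -tau
    if target < pred:
        return 1.0 - tau
    return 0.0


def fit_quantile(values: Sequence[float], tau: float, lr: float = 0.05, steps: int = 500) -> float:
    """Fit a scalar to the tau-quantile of `values` by subgradient descent on the pinball loss.

    Stand-in for a distributional critic head trained on Q(s', a) for dataset actions a.
    """
    v = 0.0
    for _ in range(steps):
        g = sum(pinball_subgradient(v, q, tau) for q in values) / len(values)
        v -= lr * g
    return v


def n_step_target(rewards: Sequence[float], gamma: float, bootstrap_value: float, terminal: bool) -> float:
    """y = sum_i gamma^i r_{t+i} + gamma^n V(s_{t+n}), dropping the bootstrap at episode end."""
    target = sum((gamma ** i) * r for i, r in enumerate(rewards))
    if not terminal:
        target += (gamma ** len(rewards)) * bootstrap_value
    return target


# Hypothetical critic values Q(s', a_j) for ten dataset actions a_j observed near s'.
q_next = [i / 10 for i in range(10)]
v_next = fit_quantile(q_next, tau=0.9)  # settles between 0.8 and 0.9
y = n_step_target([0.0, 0.0, 0.0], gamma=0.99, bootstrap_value=v_next, terminal=False)
```

With τ close to 1, the bootstrap value leans toward the best actions actually present in the data without taking a maximum over actions the data never contains. With an n-step target, a terminal reward reaches a state n steps earlier in a single backup instead of n.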

Policy Extraction With QAM

A flow-based policy produces actions through a multi-step generative process, which makes classic likelihood-based RL algorithms hard to apply: action likelihoods are difficult to compute, and directly optimizing the policy against critic gradients requires unstable backpropagation through every step of the flow.
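The sketch below shows why a flow policy has no simple action likelihood: an action is the endpoint of several sequential velocity-field evaluations, integrated here with Euler steps starting from Gaussian noise. The `velocity` function is a hypothetical stand-in for a VLA action head (a straight-line flow toward a target action stored in `obs`); a real head would be a neural network conditioned on images and language, and its endpoint would depend on the initial noise.

```python
import random
from typing import Dict, List, Optional, Tuple


def velocity(a: List[float], t: float, obs: Dict) -> List[float]:
    """Hypothetical stand-in for a learned velocity field v_theta(a, t | obs).

    Straight-line flow: transports the current sample toward obs["target_action"].
    """
    target = obs["target_action"]
    return [(g - x) / max(1.0 - t, 1e-6) for x, g in zip(a, target)]


def sample_action(obs: Dict, num_steps: int = 10,
                  rng: Optional[random.Random] = None) -> Tuple[List[float], List[List[float]]]:
    """Generate an action by Euler-integrating the flow from t=0 (noise) to t=1 (action)."""
    rng = rng or random.Random()
    dim = len(obs["target_action"])
    a = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # a_0 ~ N(0, I)
    trajectory = [list(a)]
    h = 1.0 / num_steps
    for k in range(num_steps):
        v = velocity(a, k * h, obs)  # one network evaluation per step
        a = [x + h * dx for x, dx in zip(a, v)]
        trajectory.append(list(a))
    return a, trajectory


obs = {"target_action": [0.2, -0.5, 1.0]}
action, trajectory = sample_action(obs, num_steps=10, rng=random.Random(0))
```

Computing log π(a | obs) for such a policy requires integrating the divergence of the velocity field along the path, and differentiating the final action with respect to the network parameters means chaining gradients through all `num_steps` evaluations.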

To address this challenge, LWD utilizes Q-learning with Adjoint Matching (QAM) for policy extraction. QAM uses the critic gradient to guide policy extraction for flow-based action models. It reformulates critic-guided optimization as local regression along the flow trajectory, avoiding unstable backpropagation through the full generative process and removing the need for tractable action likelihoods.
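Adjoint-style methods keep the full chain out of the training loss by first computing, for every step k of a sampled flow trajectory, the gradient of the final critic value with respect to the intermediate action a_k. The sketch below computes that quantity with a backward pass of vector-Jacobian products for a toy linear velocity field and checks it against finite differences. It illustrates only this adjoint ingredient, under assumed toy dynamics (`M`, `b`) and a toy critic Q(a) = -||a - a*||². QAM's actual regression target, noise schedule, and network parameterization are not reproduced here.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def euler_rollout(a0: Vector, M: Matrix, b: Vector, num_steps: int) -> List[Vector]:
    """Integrate the toy velocity field v(a) = M a + b from t=0 to t=1 with Euler steps."""
    h = 1.0 / num_steps
    a = list(a0)
    trajectory = [list(a)]
    for _ in range(num_steps):
        v = [sum(M[i][j] * a[j] for j in range(len(a))) + b[i] for i in range(len(a))]
        a = [x + h * dx for x, dx in zip(a, v)]
        trajectory.append(a)
    return trajectory


def q_value(a: Vector, a_star: Vector) -> float:
    """Toy critic Q(a) = -||a - a_star||^2 (hypothetical)."""
    return -sum((x - y) ** 2 for x, y in zip(a, a_star))


def q_grad(a: Vector, a_star: Vector) -> Vector:
    return [-2.0 * (x - y) for x, y in zip(a, a_star)]


def adjoint_pass(trajectory: List[Vector], M: Matrix, a_star: Vector) -> List[Vector]:
    """Return adjoints[k] = dQ(a_K) / da_k by propagating the critic gradient backward.

    Each Euler step is a_{k+1} = a_k + h (M a_k + b), whose Jacobian is J = I + h M,
    so adjoint_k = J^T adjoint_{k+1}. For a nonlinear field, J would be evaluated at
    trajectory[k]; here it is constant.
    """
    num_steps = len(trajectory) - 1
    h = 1.0 / num_steps
    n = len(M)
    J = [[(1.0 if i == j else 0.0) + h * M[i][j] for j in range(n)] for i in range(n)]
    lam = q_grad(trajectory[-1], a_star)
    adjoints = [lam]
    for _ in range(num_steps):
        lam = [sum(J[i][j] * lam[i] for i in range(n)) for j in range(n)]  # J^T lam
        adjoints.append(lam)
    adjoints.reverse()
    return adjoints


def finite_difference_grad(a0, M, b, a_star, num_steps, eps=1e-6):
    """Central-difference check of dQ(a_K)/da_0."""
    grad = []
    for i in range(len(a0)):
        plus, minus = list(a0), list(a0)
        plus[i] += eps
        minus[i] -= eps
        q_plus = q_value(euler_rollout(plus, M, b, num_steps)[-1], a_star)
        q_minus = q_value(euler_rollout(minus, M, b, num_steps)[-1], a_star)
        grad.append((q_plus - q_minus) / (2 * eps))
    return grad


M = [[-0.5, 0.2], [0.0, -0.3]]
b = [0.1, -0.2]
a_star = [1.0, 0.5]
a0 = [0.3, -0.4]
traj = euler_rollout(a0, M, b, num_steps=8)
adjoints = adjoint_pass(traj, M, a_star)
fd = finite_difference_grad(a0, M, b, a_star, num_steps=8)
```

In adjoint matching, per-step adjoint signals of this kind roughly define a regression target for the velocity field at each step, so every training term is local to one step of the flow rather than a gradient through the whole chain.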

Together, DIVL and QAM decouple policy evaluation from policy extraction: DIVL learns value estimates from heterogeneous offline-online replay, and QAM converts those estimates into stable updates for the flow-based policy.

Generalist Policy for Multiple Real-World Tasks

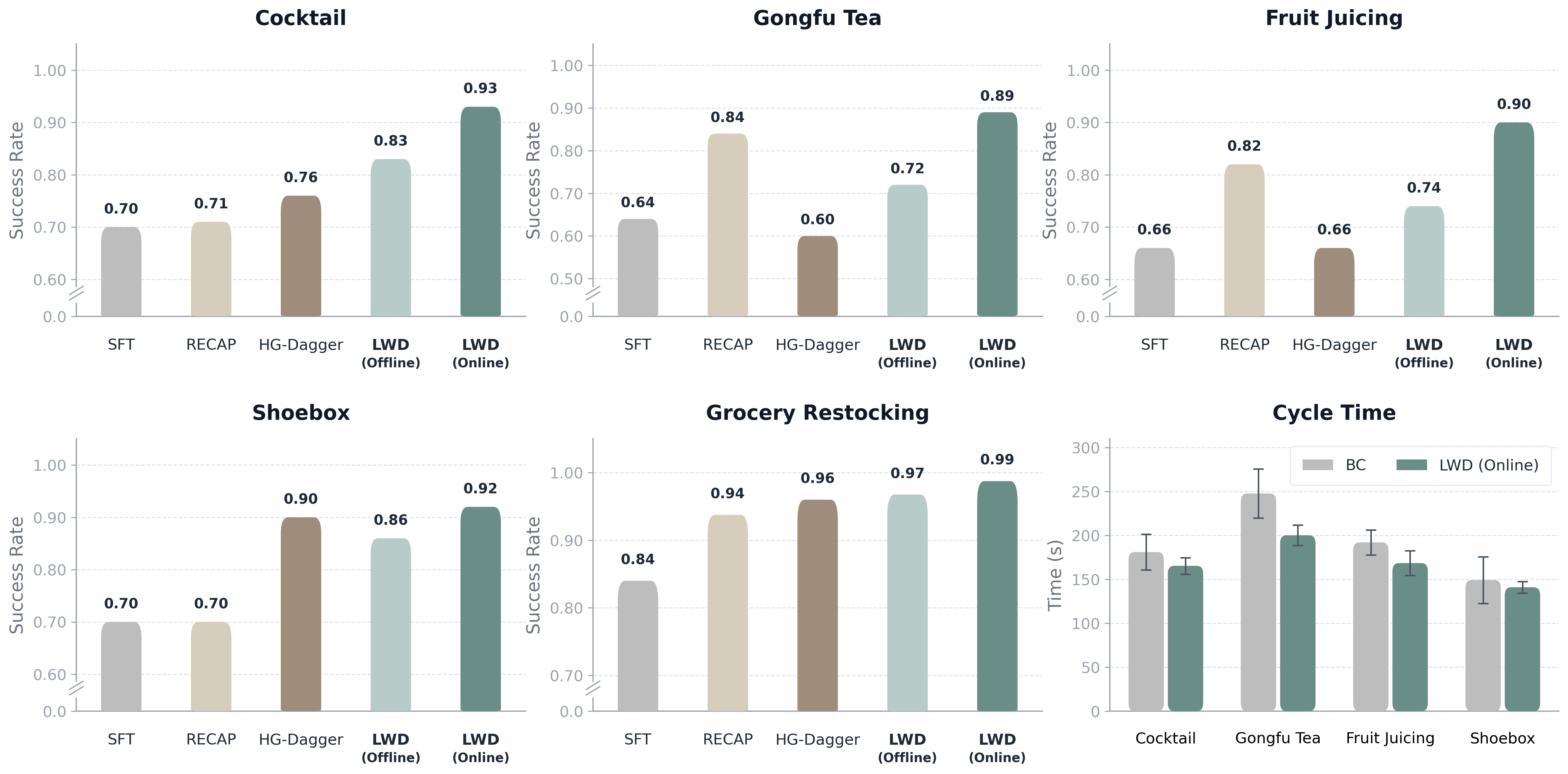

We evaluate LWD on a fleet of Agibot G1 dual-arm robots across eight real-world manipulation tasks, spanning long-horizon tasks (3-5 minutes each) and grocery restocking. All experiment videos are shown at 1x speed.

Videos: Learning While Deploying; Brew Gongfu Tea; Make Cocktail; Make Fruit Juice; Pack Shoes; Grocery

These tasks are substantially more challenging than short-horizon tabletop manipulation. Each episode lasts several minutes and requires a sequence of tightly coupled subtasks: measuring and mixing ingredients, pouring liquids, cutting and transferring fruit, manipulating containers, operating tools, packing deformable objects, and recovering from intermediate mistakes. A small error early in the episode can affect success much later, making credit assignment especially difficult.

LWD trains a single generalist policy across all tasks rather than one specialist controller per task. As online fleet experience accumulates, the policy improves most clearly on these long-horizon tasks, where deployment naturally produces the kinds of data imitation learning struggles to use: failed attempts, partial progress, retries, recoveries, and human interventions.

Experiment results on success rate and cycle time

The results show that LWD consistently improves success rate over prior post-training baselines, especially on long-horizon tasks. LWD also reduces mean cycle time on long-horizon tasks, indicating that offline-to-online post-training also makes task execution more efficient.
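For reference, the two reported metrics could be computed from episode logs as follows. The log format is hypothetical, and the numbers are made up purely to exercise the code. Averaging cycle time over successful episodes only is also an assumption, since the post does not say how cycle time is defined.

```python
from statistics import mean
from typing import Dict, List, Optional, Tuple


def summarize(logs: List[Tuple[str, bool, float]]) -> Dict[str, Dict[str, Optional[float]]]:
    """Per-task success rate and mean cycle time from (task, success, duration_s) records.

    Assumption: cycle time is averaged over successful episodes only.
    """
    by_task: Dict[str, List[Tuple[bool, float]]] = {}
    for task, success, duration in logs:
        by_task.setdefault(task, []).append((success, duration))
    summary = {}
    for task, episodes in by_task.items():
        durations = [d for ok, d in episodes if ok]
        summary[task] = {
            "success_rate": len(durations) / len(episodes),
            "mean_cycle_time_s": mean(durations) if durations else None,
        }
    return summary


# Made-up records for illustration only; these are not LWD results.
logs = [
    ("task_a", True, 200.0),
    ("task_a", False, 300.0),
    ("task_a", True, 180.0),
    ("task_b", False, 240.0),
]
summary = summarize(logs)
```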

Toward Large-Scale Deployment

The broader implication of LWD points beyond a single system. As robot fleets scale to more homes, stores, factories, and everyday environments, deployment will generate a new kind of training data: large-scale, heterogeneous, and grounded in real-world interaction. Such experience is difficult to pre-collect in a fixed offline dataset, but it naturally emerges when robots operate at scale.

In the future, large-scale deployment will become part of the training pipeline for generalist robot policies. Offline pretraining provides the foundation, while continual offline-to-online learning turns real-world fleet experience into policy improvement. LWD is a step toward this paradigm: training generalist robots not only from what humans demonstrate, but from everything robot fleets experience in the real world.